NVIDIA’s GPU backlog has crossed $500B and continues stretching deep into 2026 with no signs of slowing, because the world is pulling compute faster than manufacturing can replenish it, even with record shipments moving out the door. But the backlog is only the surface. The deeper story lives in how companies are responding to the pressure by rethinking how intelligence moves through their systems.

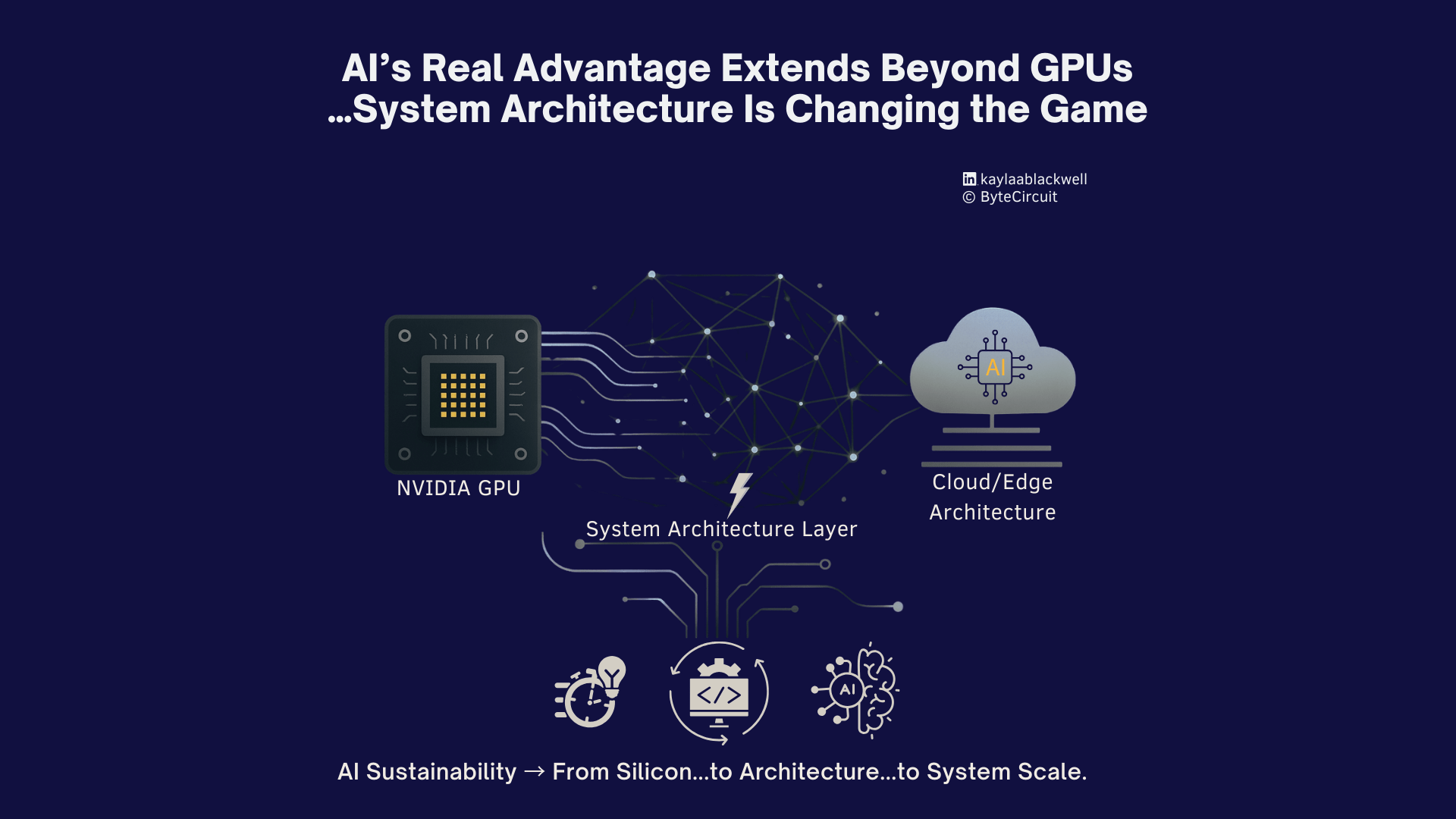

A quiet architectural shift is happening across the ecosystem as the industry begins to redesign how intelligence moves…and it is already reshaping how the next decade of AI will be built.

NVIDIA’s roadmap reflects the same direction.

Blackwell was designed to shrink memory bottlenecks and reduce the energy footprint of inference, and the upcoming Rubin architecture goes even further by tightening the integration between compute, memory, and network bandwidth. These aren’t just faster chips…they are signs that the future of scaling depends on eliminating friction in the system around the GPU, not only increasing GPU strength itself.

Pressure in AI now lives inside the architecture that surrounds the silicon…placement, data movement, orchestration, power, memory, and scheduling. These layers shape how far systems can scale and determine how far a GPU investment can stretch.

1. More Signals From the Industry

Across the major players, patterns are emerging that tie back to the Triple Bottom Line…people, planet, profit.

Amazon’s Project Greenland surfaced a new direction for AI efficiency.

They are allocating GPU clusters with more intention…reducing idle compute…rebalancing workloads across regions to improve placement decisions…balancing energy patterns…and shrinking time-to-serve for internal and external users. Greenland treats orchestration and power as design constraints, not afterthoughts.

OpenAI has been strengthening the software layers around the hardware.

Their recent gains have come from better batching, smarter inference pipelines, tighter memory planning, more efficient scheduling, and architectural optimizations that reduce friction across the compute fabric.
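To make "better batching" concrete, here is a minimal sketch of one common idea: grouping queued requests so each batch stays under a token budget. This is an illustration only, not a description of how OpenAI does it. The `Request` class, the `batch_requests` function, and the token counts are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Request:
    request_id: str
    tokens: int  # hypothetical size estimate for the request


def batch_requests(requests, max_tokens_per_batch):
    """Group requests, in arrival order, into batches under a token budget."""
    batches, current, current_tokens = [], [], 0
    for req in requests:
        # Close the current batch if adding this request would exceed the budget.
        if current and current_tokens + req.tokens > max_tokens_per_batch:
            batches.append(current)
            current, current_tokens = [], 0
        current.append(req)
        current_tokens += req.tokens
    if current:
        batches.append(current)
    return batches


# Made-up queue: four requests with assumed token counts.
queue = [Request("a", 300), Request("b", 500), Request("c", 400), Request("d", 200)]
batches = batch_requests(queue, max_tokens_per_batch=1000)
batch_ids = [[r.request_id for r in batch] for batch in batches]
print(batch_ids)
```

With these numbers, four requests become two GPU passes instead of four. A request larger than the budget still gets a batch of its own in this sketch, which a real pipeline would likely handle separately.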

Red Hat is pushing hybrid AI into production reality.

Using OpenShift AI and distributed container platforms, they are helping teams run ML closer to where data originates…keeping performance high without relying solely on massive central clusters.

IBM is rethinking workload placement through hybrid cloud and Watsonx.

Their research shows that aligning compute with data gravity and energy patterns leads to faster, cleaner, more resilient systems. It is a reminder that efficiency comes from running the right workload in the right environment…reducing unnecessary data movement…improving energy alignment…and optimizing compute through orchestration instead of brute expansion.

All of these moves point to the same truth.

Teams are beginning to use compute with more intention.

2. The Shift Shaping the Industry

Intentional AI systems are now being designed around intelligence flow.

Teams are focusing on:

- smarter job scheduling

- reducing idle compute

- aligning workloads with energy patterns

- improving memory footprints

- placing inference where it fits

- shrinking unnecessary data movement

- strengthening orchestration instead of inflating infrastructure
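Several items on this list come down to packing work more tightly onto hardware you already have. A minimal sketch, assuming each job has a known memory footprint and every GPU has the same capacity, is first-fit decreasing bin packing. The job names, sizes, and the `pack_jobs` helper are hypothetical, and real schedulers weigh far more than memory.

```python
def pack_jobs(job_memory_gb, gpu_capacity_gb):
    """First-fit decreasing: put each job on the first GPU with enough free memory."""
    gpus = []  # each entry: {"free": remaining GB, "jobs": [job names]}
    # Largest jobs first, so small jobs can fill the gaps left behind.
    ordered = sorted(job_memory_gb.items(), key=lambda kv: kv[1], reverse=True)
    for name, mem in ordered:
        if mem > gpu_capacity_gb:
            raise ValueError(f"{name} needs {mem} GB, more than one GPU holds")
        for gpu in gpus:
            if gpu["free"] >= mem:
                gpu["free"] -= mem
                gpu["jobs"].append(name)
                break
        else:
            # No existing GPU had room, so bring one more into use.
            gpus.append({"free": gpu_capacity_gb - mem, "jobs": [name]})
    return gpus


# Made-up memory footprints in GB.
jobs = {"embed": 20, "rerank": 30, "chat": 50, "vision": 40, "asr": 10}
placement = pack_jobs(jobs, gpu_capacity_gb=80)
for index, gpu in enumerate(placement):
    print(index, gpu["jobs"], "free GB:", gpu["free"])
```

Here five jobs land on two 80 GB GPUs with 10 GB left idle, where one job per GPU would have used five.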

These decisions rarely make headlines. But this is the work that will sustain the future of AI.

3. What Companies Are Learning

Across cloud, utilities, telecom, manufacturing, and enterprise environments, similar lessons are emerging.

Efficiency is becoming a strategic capability

Teams that optimize compute placement and streamline their pipelines are outpacing teams that only expand capacity.

Orchestration is becoming a design layer

Schedulers, placement engines, and inference routers are becoming central to performance architecture.

Energy is shaping planning

Power is becoming part of architecture planning, part of cost modeling, and part of long-term sustainability strategy.
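One way power can enter planning is by shifting deferrable jobs into the cleanest or cheapest hours. The sketch below assumes you have an hourly carbon-intensity forecast and a job that can start at any hour. The forecast values and the `best_start_hour` function are made up for illustration.

```python
def best_start_hour(carbon_intensity, job_hours):
    """Return (start index, total) of the contiguous window with the lowest sum."""
    if job_hours <= 0 or job_hours > len(carbon_intensity):
        raise ValueError("job_hours must fit inside the forecast")
    window = sum(carbon_intensity[:job_hours])
    best_start, best_total = 0, window
    for start in range(1, len(carbon_intensity) - job_hours + 1):
        # Slide the window: add the hour that entered, drop the hour that left.
        window += carbon_intensity[start + job_hours - 1] - carbon_intensity[start - 1]
        if window < best_total:
            best_start, best_total = start, window
    return best_start, best_total


# Hypothetical hourly forecast in gCO2/kWh.
forecast = [420, 390, 310, 250, 240, 300, 410, 450]
start, total = best_start_hour(forecast, job_hours=3)
print(start, total)
```

The same function works with hourly electricity prices in place of carbon intensity.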

Workloads are being aligned with reality

Not everything belongs in the cloud.

Workloads are moving where they fit best…edge, core, on-prem, hybrid, federated, cloud, or multi-cloud…based on latency, cost, physics, and data gravity.
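A placement decision like this can be sketched as a small scoring function. This version treats latency as a hard limit and folds data gravity into cost by charging for every gigabyte that has to move. The site names, prices, and the `choose_site` helper are hypothetical, and a real placement engine would weigh many more factors.

```python
from dataclasses import dataclass


@dataclass
class Site:
    name: str
    latency_ms: float       # round trip to the users of this workload
    cost_per_hour: float    # compute price at this site
    data_to_move_gb: float  # data that would have to be copied to this site


def choose_site(sites, max_latency_ms, hours, egress_cost_per_gb):
    """Pick the cheapest site that meets the latency limit, counting data movement."""
    eligible = [s for s in sites if s.latency_ms <= max_latency_ms]
    if not eligible:
        return None  # nothing satisfies the latency requirement

    def total_cost(site):
        compute = site.cost_per_hour * hours
        movement = site.data_to_move_gb * egress_cost_per_gb
        return compute + movement

    return min(eligible, key=total_cost)


# Made-up candidate sites and prices.
sites = [
    Site("cloud-region", latency_ms=80, cost_per_hour=2.0, data_to_move_gb=5000),
    Site("on-prem", latency_ms=15, cost_per_hour=3.0, data_to_move_gb=0),
    Site("edge", latency_ms=5, cost_per_hour=6.0, data_to_move_gb=0),
]
choice = choose_site(sites, max_latency_ms=50, hours=100, egress_cost_per_gb=0.05)
print(choice.name if choice else "no eligible site")
```

In this example the cloud region has the lowest hourly price, but it misses the latency limit and would also require moving 5 TB of data, so the on-prem site wins.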

More compute is becoming the last option

Teams are solving with design before they solve with hardware.

💡 The Bigger Picture

AI’s growth sits on top of architecture.

Energy…memory…data paths…placement…scheduling…orchestration.

These layers define how far intelligence can stretch.

Amazon’s Greenland initiative…OpenAI’s efficiency improvements…Red Hat’s hybrid AI approach…IBM’s placement intelligence…and NVIDIA’s evolving hardware plus software stack are surfacing a shared message.

We are entering a period where thoughtful intelligence carries more weight than raw scale. And the architectural choices being made now will shape how far this industry can go.

And while the Triple Bottom Line still matters…people, planet, profit…it only works when progress includes the humans doing the work.

So, this moment is also a reminder that efficiency and responsibility have to rise together.

💬❓ As you think about your next AI or distributed system design…where can architecture do more of the lifting before you reach for more compute?

🧩 Follow me, Kaylaa T. Blackwell, and subscribe to ByteCircuit for more tech breakdowns that help you connect the dots.